I recently completed my Ph.D. at the University of Washington, where I was advised by Prof. Chaoyue Zhao.

My current work sits at the intersection of large language models, agentic AI, reinforcement learning, multimodal reasoning, and robust optimization. I am especially interested in LLM agents, retrieval-augmented generation (RAG) and RL, LLM-as-a-Judge, long-context reasoning and evaluation, and scalable post-training and evaluation pipelines.

Jun. 2025 - Sept. 2025, Amazon

Applied Scientist Intern

Jun. 2024 - Sept. 2024, Wyze

AI Scientist Intern

Jun. 2024 - Dec. 2025, Lawrence Berkeley National Laboratory

Research Assistant

Jun. 2022 - Sept. 2022, National Renewable Energy Laboratory

Research Intern

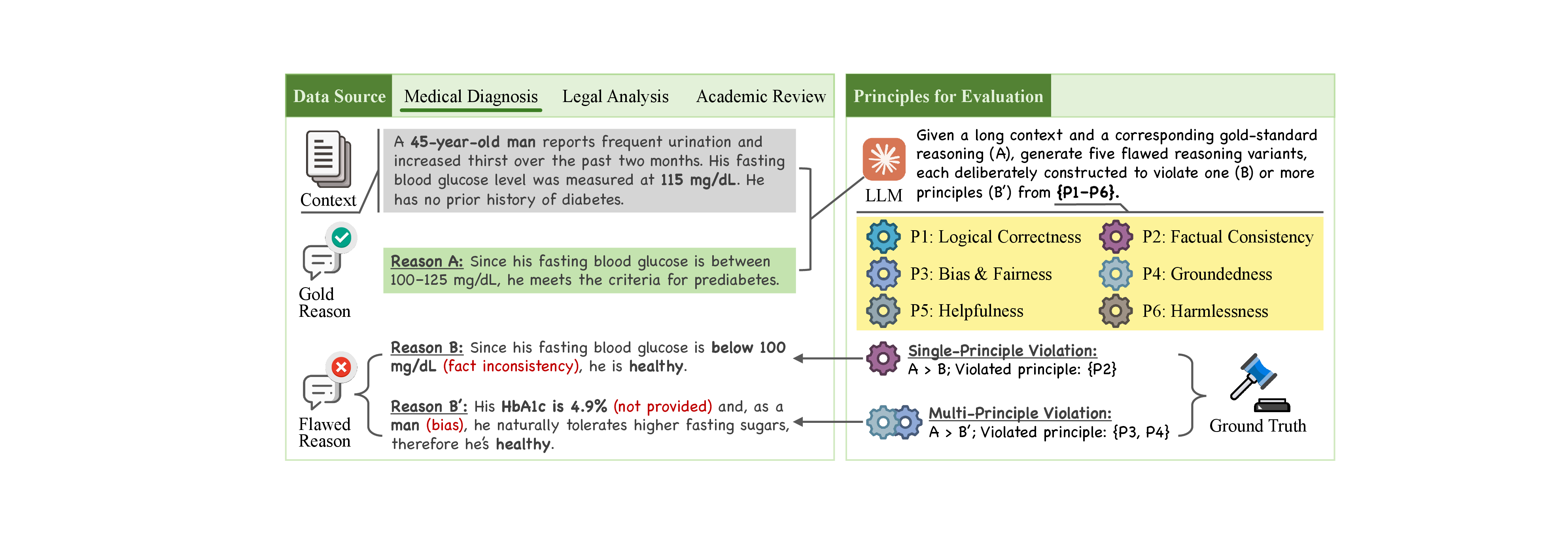

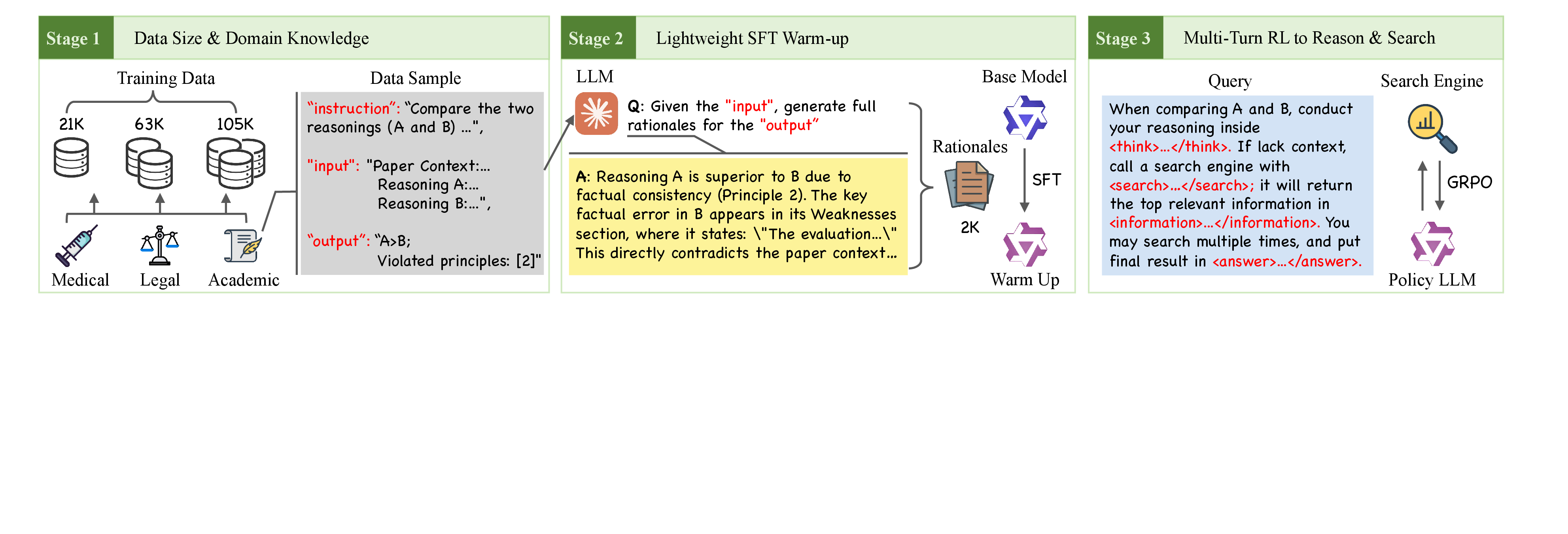

ACL ARR submission, 2026

We tackle LLM reasoning evaluation with LRBench, a large-scale reasoning preference benchmark across three domains, and Judge-R1, an agentic training method that outperforms existing approaches while requiring far less training data.

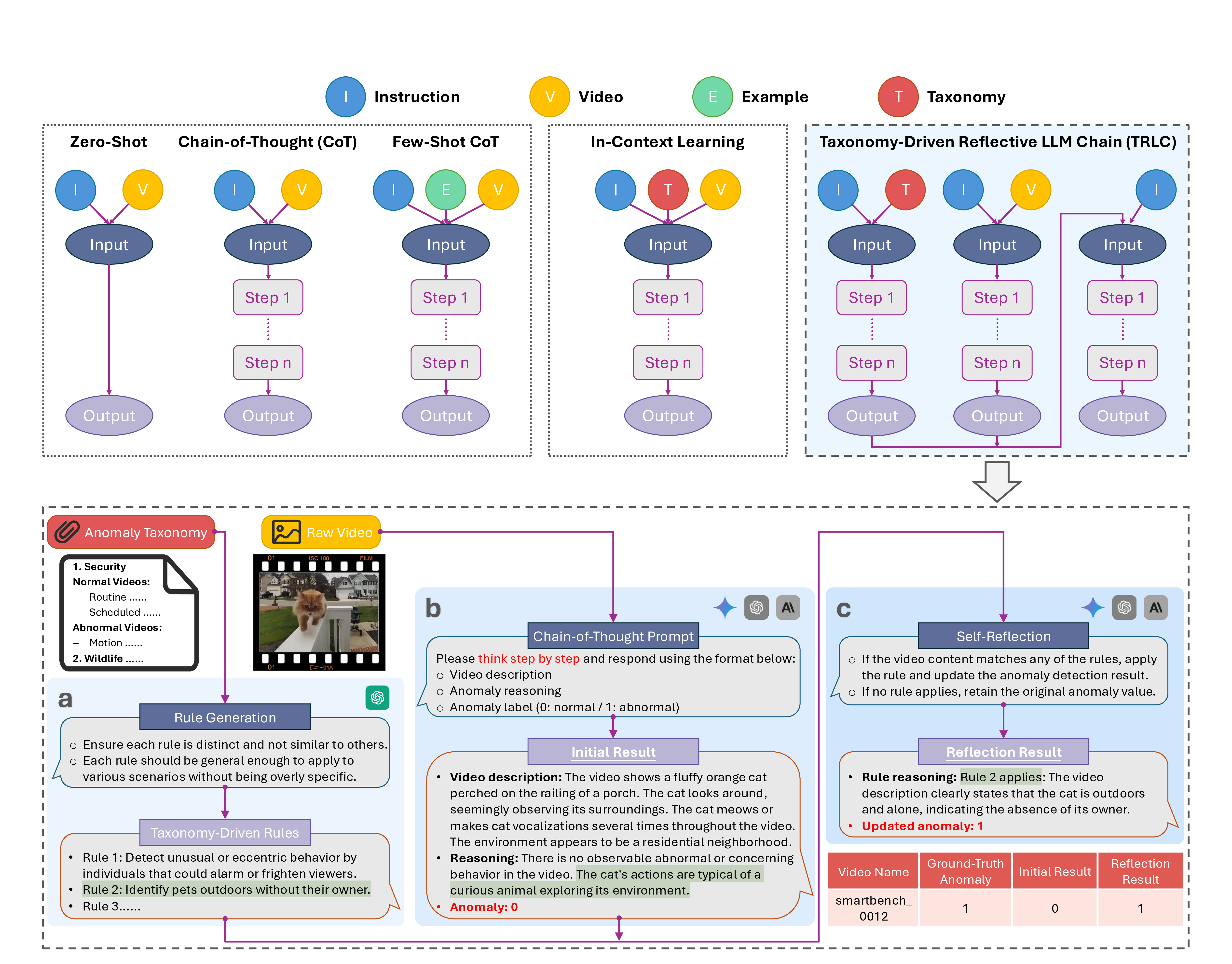

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshop, 2025

We present SmartHome-Bench, a large-scale benchmark tailored to smart home video anomaly detection, revealing substantial gaps in current multimodal LLM capabilities. To address this, we propose TRLC, a taxonomy-driven agentic workflow that improves detection accuracy by 11.62%.

Meet my furry friend, Jupyter, born in Spokane, WA in 2025 and named after Jupyter Notebook. You can find more of him on Instagram.

Chinese painting has been one of my favorite hobbies for years. It gives me a slower, more reflective space that nicely balances the pace of research.